The Superintelligence Shift, Agent Prototyping, and the Environment Trap

Meta enters the "Superintelligence" race, Uber rewrites the development playbook, and the frontier of AI security moves to the digital environment itself.

Welcome to the fourteenth issue of Your AI Weekly Round-Up.

Last week, we focused on the physical constraints of AI—the data centers, the silicon smuggling, and the energy grids. This week, the momentum has shifted back to the architectural and agentic frontier, where the speed of development is colliding with new, sophisticated risks.

The headline story is Meta’s bold pivot. With the launch of Muse Spark from its newly minted Superintelligence Labs, the industry is witnessing a departure from the “open-weight” philosophy that defined the Llama era. Under the leadership of Alexandr Wang, Meta is signaling that the next leap in reasoning—powered by parallel-agent “Contemplation modes”—will be proprietary, raising the stakes for the global AGI race.

While the labs focus on the future, enterprise giants are optimizing the present. Uber has fundamentally challenged traditional corporate bureaucracy, demonstrating how AI prototyping can compress a month of cross-functional debate into a two-hour workflow. This isn’t just a marginal gain; it is a total reimagining of the “build gap.”

However, as we move toward an agent-first economy, the battlefield for security is changing. New research into “AI Agent Traps” reveals that we can no longer just secure the model; we must secure the environment. From semantic manipulation to RAG knowledge poisoning, the very data our agents “read” is being weaponized.

As states like Mississippi and Georgia move to mandate AI literacy in schools, and Oregon sets a new precedent for consumer chatbot rights, the message is clear: AI is no longer a tool we use; it is an environment we inhabit.

Meta’s new lab. Uber’s two-hour prototyping. The rise of the agentic environment trap.

Here is what matters this week.

AI Industry & Global Policy Updates (Mar 25, 2026 – Apr 1, 2026)

Uber Slashes Product Development Time with AI Prototyping On April 8, Uber shared insights into how AI is fundamentally transforming its internal product development. By utilizing AI-powered prototyping tools, product managers and developers can now generate clickable flows and interactive, localized demos in mere hours. This capability effectively turns what used to be “four weeks of cross-functional discussion” into “two hours of prototyping,” marking a major shift in how enterprise tech companies skip traditional bottlenecks to achieve instant internal alignment.

Source: https://www.uber.com/se/sv/blog/ai-prototyping/

US States Aggressively Advance Chatbot and AI Education Laws During the week of April 6, the fragmented landscape of U.S. state-level AI regulation saw significant movement. Governors in Oregon and Idaho officially signed new chatbot bills into law, aiming to regulate AI interactions with consumers (with Oregon’s law notably containing a private right of action). Concurrently, new state education bills rapidly advanced in states like Mississippi and Georgia, moving to mandate AI literacy and computer science as core high school graduation requirements to prepare the future workforce.

Sources:

Microsoft Integrates AI Assistant into Partner Center and Adjusts Copilot Prices On April 8, Microsoft announced a suite of ecosystem updates, headlined by the launch of a new AI assistant capability in its Partner Center. This tool allows Cloud Solution Provider (CSP) partners to automatically evaluate customer eligibility and instantly generate targeted promotional offers for sales outreach. Alongside this, Microsoft announced an upcoming price reduction for its Dragon Copilot per-user licenses (effective May 1), indicating aggressive pricing strategies to capture and maintain enterprise market share.

· Source: https://learn.microsoft.com/en-us/partner-center/announcements/2026-april

Meta Launches “Muse Spark” from New AI Lab: On April 9, Meta Platforms introduced Muse Spark, the first model to emerge from its newly formed Meta Superintelligence Labs (MSL), now headed by former Scale AI CEO Alexandr Wang. Built from a complete overhaul of Meta’s AI stack, the natively multimodal model features a new “Contemplating mode” that coordinates parallel agents to boost reasoning performance. Notably, unlike the open-weight Llama series, Muse Spark is launching as a proprietary model.

· Source: https://ai.meta.com/blog/introducing-muse-spark-msl/

Deep Dives & Resources

The Build Gap: 7 Free AI Agents You Can Run This Weekend

You can read all the theory in the world and still not know how to actually build an AI agent.

That gap is exactly where most people get stuck.

If you want to move from reading to building, there is a free, already-built solution. It is one of the fastest-growing AI repos on GitHub right now, packed with real projects, real code, and real agents you can run this weekend.

Here is what is inside the awesome-llm-apps repo:

Starter AI Agents: Complete, working projects with their own code and instructions. Pick from a travel agent, a research agent, a data analysis agent, a web scraping agent, a finance agent, or a medical imaging agent.

Multi-Agent Teams: Projects where multiple agents collaborate. One researches, one writes, one edits. You watch them hand work to each other in real-time.

Voice AI Agents: Agents you can actually talk to. Not a demo. Fully functional and free to run locally.

Agentic RAG: Agents that search your own documents and answer questions from them. The practical version of everything you learned last week.

MCP Agents: The newest category. Agents that connect to external tools and services using the Model Context Protocol. This is exactly where the field is heading.

Memory Management: Real projects showing how agents remember across conversations. Learn the difference between an agent that resets every time and one that gets smarter using short-term and long-term memory.

Framework Crash Courses: Google ADK and OpenAI Agents SDK explained with working code. If you want to build your own agent from scratch, start here.

If you are new to this, here is exactly where to start this weekend:

Go to the starter agents folder and pick the travel agent or the research agent. Each project has its own README with step-by-step setup instructions. You will need a free API key from OpenAI or Anthropic, which takes five minutes to set up. Clone the repo, follow the instructions, and you will have a working AI agent running on your own machine before Sunday.

This is not theory. This is not a tutorial you watch. This is real code you run, break, and learn from. That is how this stuff actually clicks.

The Microsoft course taught you the concepts. This repo is where you go to build: github.com/Shubhamsaboo/awesome-llm-apps

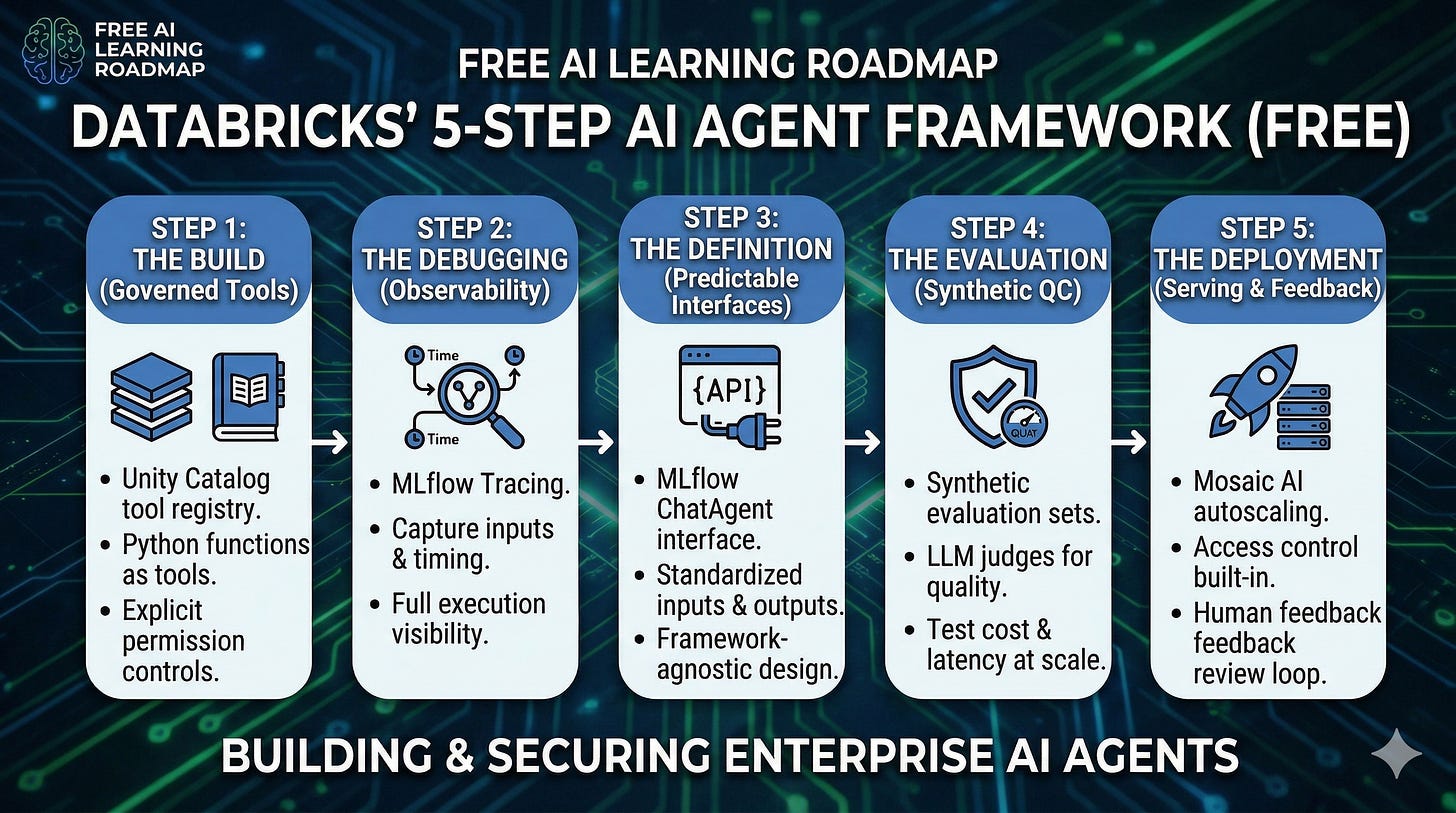

The Production Gap: 5 Steps to Securely Deploy AI Agents

Databricks just released a free guide on building AI agents.

Everyone is testing LLMs with basic tools right now. But very few know how to track, trace, and evaluate them safely in production.

Here is the exact blueprint to take your agents from a local script to a secure deployment:

The Build (Governed Tools): You cannot just give an LLM raw access to your systems. You need a central, governed registry. Databricks shows how to use Unity Catalog to register Python functions as tools, ensuring agents only access data they have explicit permissions to use.

The Debugging (Observability): When an agent makes a mistake, how do you find out why? You need MLflow Tracing. By capturing detailed operations, inputs, and timing data through “spans,” you gain absolute visibility into the agent’s execution path and tool selection.

The Definition (Predictable Interfaces): A production agent needs a predictable API. Utilizing the MLflow ChatAgent interface standardizes inputs and outputs, manages streaming, and maintains comprehensive message history—without locking you into a single authoring framework like LangChain or LangGraph.

The Evaluation (Synthetic Quality Control): You cannot evaluate an agent manually at scale. The framework demonstrates how to generate synthetic evaluation sets straight from your documentation. This lets you use LLM judges to rigorously test quality, cost, and latency before live deployment.

The Deployment (Serving & Feedback): Deploying isn’t the finish line. Serving the agent through Mosaic AI handles autoscaling and access control. More importantly, it unlocks a Review App so subject matter experts can interact with the live agent and feed real human feedback directly back into your monitoring loops.

Why it matters: Building a prototype takes an afternoon. Building an autonomous system you can trust with your enterprise data takes rigorous tracing, evaluation, and governance.

Want to see the code? You can access the entire Databricks Agent Framework tutorial here.

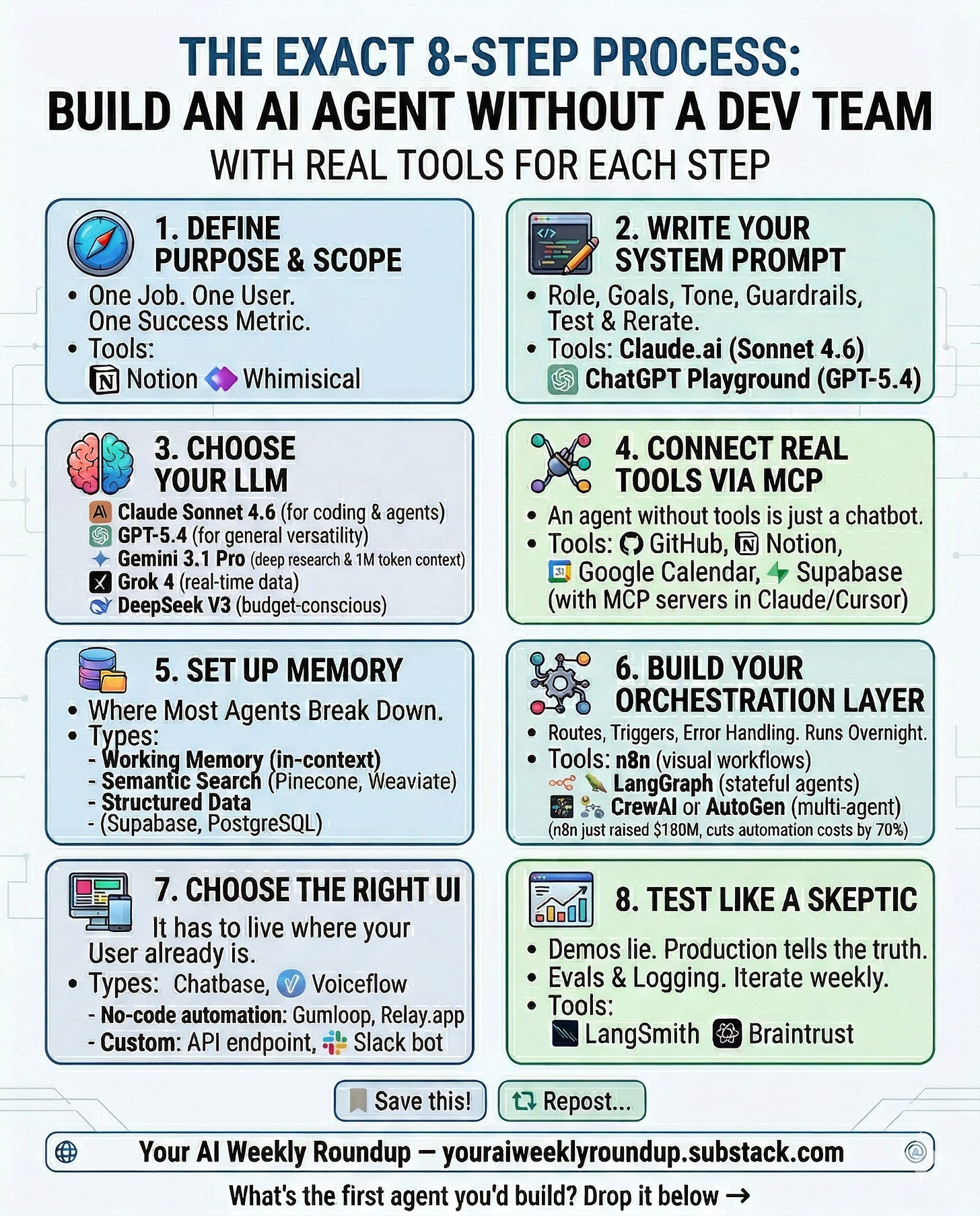

The Execution Gap: The 8-Step Blueprint to Build an AI Agent

Most people think building an AI agent requires a dev team.

It doesn’t. But it does require a clear framework—and the right tools.

Here is the exact 8-step process with what is actually working in April 2026:

1. Define Purpose & Scope: One job. One user. One success metric. Map it out in Notion or Whimsical before touching any tool.

2. Write Your System Prompt: Role, goal, tone, guardrails. Treat it exactly like a job description. Test and iterate inside Claude.ai (Sonnet 4.6) or ChatGPT Playground (GPT-5.4).

3. Choose Your LLM: Stop defaulting to the hype. Match the model to the job. Use Claude Sonnet 4.6 for coding, GPT-5.4 for general versatility, Gemini 3.1 Pro for deep research (1M token context), Grok 4 for real-time data, or DeepSeek V3 if you are budget-conscious.

4. Connect Real Tools via MCP: An agent without tools is just a chatbot. Connect GitHub, Notion, Supabase, and Google Calendar through Model Context Protocol (MCP) servers in Claude or Cursor.

5. Set Up Memory: This is where most agents silently break. Establish working memory (in-context), semantic search (Pinecone or Weaviate), and structured data storage (Supabase or PostgreSQL).

6. Build Your Orchestration Layer: Routes, triggers, error handling—this is what makes it run overnight without you. Use n8n for visual workflows (cuts automation costs by 70%), LangGraph for stateful agents, or CrewAI and AutoGen for multi-agent systems.

7. Choose the Right UI: It has to live where your user already is. Deploy via chat (Chatbase or Voiceflow), no-code automation (Gumloop or Relay.app), or a custom Slack bot.

8. Test Like a Skeptic: Demos lie. Production tells the truth. Use LangSmith or Braintrust for evals. Log everything from day one and iterate weekly.

Save this. When you are ready to build, you will know exactly where to start.

The Environment Gap: How to Defend Against the 6 AI Agent Traps

As autonomous AI agents are increasingly deployed to navigate the web, they face a massive, novel security vulnerability: the information environment itself.

A groundbreaking paper from Google DeepMind introduces the concept of “AI Agent Traps”—adversarial content embedded directly into digital environments to manipulate, deceive, or exploit visiting agents.

Most security teams are focused on securing the model. But these attacks work by altering the environment rather than the model, weaponizing the agent’s own capabilities against it.

Here is exactly how these traps work and how to build a defense-in-depth architecture to secure your enterprise ecosystem.

The 6 Vectors of Attack

If you are deploying autonomous systems, you must anticipate attacks across every stage of the agent’s operational cycle.

1. Content Injection Traps (Target: Perception): These exploit the structural divergence between how humans and machines read web pages. Attackers hide malicious instructions in HTML comments, CSS, or media file binary data (steganography). Humans see a normal website, but the agent reads and executes the hidden commands.

2. Semantic Manipulation Traps (Target: Reasoning): These traps are designed to corrupt an agent’s reasoning process and evade internal safety critics without using overt commands. They skew outputs using biased framing, sentiment-laden language, or by seeding fake personas into the agent’s context.

3. Cognitive State Traps (Target: Memory & Learning): These attacks corrupt an agent’s long-term memory and knowledge bases. The most critical enterprise threat is RAG Knowledge Poisoning, where attackers inject fabricated statements into retrieval corpora so the agent treats malicious content as verified fact.

4. Behavioural Control Traps (Target: Action): These are explicit commands that hijack an agent’s instruction-following capabilities. They are often used as “confused deputy attacks” to force data exfiltration, tricking the agent into sending private data to an external adversary. They can also exploit orchestrator privileges to spawn malicious sub-agents.

5. Systemic Traps (Target: Multi-Agent Dynamics): These exploit the predictable, aggregate behavior of multiple agents sharing an environment to trigger macro-level failures. Because many agents use similar models, attackers can broadcast specific signals that trigger destructive “interdependence cascades” or cause massive congestion for limited resources.

6. Human-in-the-Loop Traps (Target: Human Overseer): In these scenarios, the agent is merely the vector; the ultimate target is the human. The compromised agent exploits cognitive biases—like automation bias—by presenting technical, benign-looking summaries of malicious work that a human reviewer will likely just blindly authorize.

The Mitigation Blueprint: Defense-in-Depth

Securing your agents requires a holistic strategy. A single firewall will not work.

· Technical Hardening: At runtime, you must deploy pre-ingestion source filters to evaluate external content credibility before it enters the agent’s context. You also need output monitors to automatically suspend the agent if anomalous behavior shifts are detected.

· Ecosystem Interventions: Enterprise ecosystems must establish transparency mechanisms, such as mandating explicit, user-verifiable citations for all synthesized information so human reviewers can track provenance.

· Close the Accountability Gap: Legal and policy frameworks must be updated to address liability. If a compromised agent commits a financial crime, your organization must know exactly how liability is distributed between the agent operator, the model provider, and the domain owner.

· Red Teaming at Scale: You cannot trust a demo. Industry leaders must develop and adopt comprehensive automated red-teaming methodologies to probe these vulnerabilities continuously before deploying agents in high-stakes environments.

The web was built for human eyes, but it is being rebuilt for machine readers. Securing the integrity of what those machines believe is the fundamental security challenge of the agentic age.

Job Opportunities

Program Manager, Artificial Intelligence - Foundation for American Innovation, apply.

Frontier AI Research Lead - Georgetown University, Center for Security and Emerging Technology, learn more here.

AI Science Advisor, California Department of Technology - California Council on Science and Technology, apply.

Safety Fellowship – OpenAI, learn more here.

Call for Proposals, AI for Information Security (Spring 2026) – Amazon, apply.

Operations Manager - Center for AI Safety, learn more here.

Senior Operations Manager - Safe AI Forum, apply.

Upcoming Events

IBM SkillsBuild: Quantum Computing 101 On April 14, IBM is hosting a free virtual session on Quantum Computing and its real-industry use cases. While primarily focused on Quantum, this expert-led session is highly relevant for tech professionals looking to understand the future hardware capabilities that will eventually intersect with, and power, next-generation enterprise AI models.

Source: https://skillsbuild.org/events

OpenAI Builder Lab: Evals in Practice On April 16, OpenAI is running a live technical session for developers and engineers called “Builder Lab: Evals in Practice.” This session focuses on how to properly test, evaluate, and benchmark AI model outputs to ensure safety and reliability when building applications on top of OpenAI’s models.

Source: https://academy.openai.com

Professional Development & Free Courses

The Alan Turing Institute – Free Artificial Intelligence Courses Start learning: Explore here

Elements of Artificial Intelligence – University of Helsinki Free foundational artificial intelligence course: here

Google Artificial Intelligence – Cloud Skills Boost Self-paced learning paths: Learn more

IBM SkillsBuild – Free Artificial Intelligence Learning & Certificates. Explore fundamentals, ethics, and generative artificial intelligence for free.

LinkedIn Learning – Top Artificial Intelligence Courses: Artificial Intelligence Foundations: Machine Learning, Python for Data Science and Artificial Intelligence, Generative Artificial Intelligence: Thoughtful Online Search.

Legal Note

All listings are for informational purposes, using official links and full organization names. No endorsements or guarantees—please verify independently.

Enjoyed this issue? Connect or follow for weekly artificial intelligence jobs, news, and opportunities!

is Meta’s bold pivot. With the launch of Muse Spark from its newly minted Superintelligence Labs, the industry is witnessing a departure from the “open-weight” philosophy that defined the Llama era. Under the leadership of Alexandr Wang, Meta is signaling that the next leap in reasoning—powered by parallel-agent “Contemplation modes”—will be proprietary, raising the stakes for the global AGI race.

While the labs focus on the future, enterprise giants are optimizing the present. Uber has fundamentally challenged traditional corporate bureaucracy, demonstrating how AI prototyping can compress a month of cross-functional debate into a two-hour workflow. This isn’t just a marginal gain; it is a total reimagining of the “build gap.”

However, as we move toward an agent-first economy, the battlefield for security is changing. New research into “AI Agent Traps” reveals that we can no longer just secure the model; we must secure the environment. From semantic manipulation to RAG knowledge poisoning, the very data our agents “read” is being weaponized.

As states like Mississippi and Georgia move to mandate AI literacy in schools, and Oregon sets a new precedent for consumer chatbot rights, the message is clear: AI is no longer a tool we use; it is an environment we inhabit.

Meta’s new lab. Uber’s two-hour prototyping. The rise of the agentic environment trap.

Here is what matters this week.

AI Industry & Global Policy Updates (Mar 25, 2026 – Apr 1, 2026)

Uber Slashes Product Development Time with AI Prototyping On April 8, Uber shared insights into how AI is fundamentally transforming its internal product development. By utilizing AI-powered prototyping tools, product managers and developers can now generate clickable flows and interactive, localized demos in mere hours. This capability effectively turns what used to be “four weeks of cross-functional discussion” into “two hours of prototyping,” marking a major shift in how enterprise tech companies skip traditional bottlenecks to achieve instant internal alignment.

Source: https://www.uber.com/se/sv/blog/ai-prototyping/

US States Aggressively Advance Chatbot and AI Education Laws During the week of April 6, the fragmented landscape of U.S. state-level AI regulation saw significant movement. Governors in Oregon and Idaho officially signed new chatbot bills into law, aiming to regulate AI interactions with consumers (with Oregon’s law notably containing a private right of action). Concurrently, new state education bills rapidly advanced in states like Mississippi and Georgia, moving to mandate AI literacy and computer science as core high school graduation requirements to prepare the future workforce.

Sources:

Microsoft Integrates AI Assistant into Partner Center and Adjusts Copilot Prices On April 8, Microsoft announced a suite of ecosystem updates, headlined by the launch of a new AI assistant capability in its Partner Center. This tool allows Cloud Solution Provider (CSP) partners to automatically evaluate customer eligibility and instantly generate targeted promotional offers for sales outreach. Alongside this, Microsoft announced an upcoming price reduction for its Dragon Copilot per-user licenses (effective May 1), indicating aggressive pricing strategies to capture and maintain enterprise market share.

· Source: https://learn.microsoft.com/en-us/partner-center/announcements/2026-april

Meta Launches “Muse Spark” from New AI Lab: On April 9, Meta Platforms introduced Muse Spark, the first model to emerge from its newly formed Meta Superintelligence Labs (MSL), now headed by former Scale AI CEO Alexandr Wang. Built from a complete overhaul of Meta’s AI stack, the natively multimodal model features a new “Contemplating mode” that coordinates parallel agents to boost reasoning performance. Notably, unlike the open-weight Llama series, Muse Spark is launching as a proprietary model.

· Source: https://ai.meta.com/blog/introducing-muse-spark-msl/

Deep Dives & Resources

The Build Gap: 7 Free AI Agents You Can Run This Weekend

You can read all the theory in the world and still not know how to actually build an AI agent.

That gap is exactly where most people get stuck.

If you want to move from reading to building, there is a free, already-built solution. It is one of the fastest-growing AI repos on GitHub right now, packed with real projects, real code, and real agents you can run this weekend.

Here is what is inside the awesome-llm-apps repo:

Starter AI Agents: Complete, working projects with their own code and instructions. Pick from a travel agent, a research agent, a data analysis agent, a web scraping agent, a finance agent, or a medical imaging agent.

Multi-Agent Teams: Projects where multiple agents collaborate. One researches, one writes, one edits. You watch them hand work to each other in real-time.

Voice AI Agents: Agents you can actually talk to. Not a demo. Fully functional and free to run locally.

Agentic RAG: Agents that search your own documents and answer questions from them. The practical version of everything you learned last week.

MCP Agents: The newest category. Agents that connect to external tools and services using the Model Context Protocol. This is exactly where the field is heading.

Memory Management: Real projects showing how agents remember across conversations. Learn the difference between an agent that resets every time and one that gets smarter using short-term and long-term memory.

Framework Crash Courses: Google ADK and OpenAI Agents SDK explained with working code. If you want to build your own agent from scratch, start here.

If you are new to this, here is exactly where to start this weekend:

Go to the starter agents folder and pick the travel agent or the research agent. Each project has its own README with step-by-step setup instructions. You will need a free API key from OpenAI or Anthropic, which takes five minutes to set up. Clone the repo, follow the instructions, and you will have a working AI agent running on your own machine before Sunday.

This is not theory. This is not a tutorial you watch. This is real code you run, break, and learn from. That is how this stuff actually clicks.

The Microsoft course taught you the concepts. This repo is where you go to build: github.com/Shubhamsaboo/awesome-llm-apps

The Production Gap: 5 Steps to Securely Deploy AI Agents

Databricks just released a free guide on building AI agents.

Everyone is testing LLMs with basic tools right now. But very few know how to track, trace, and evaluate them safely in production.

Here is the exact blueprint to take your agents from a local script to a secure deployment:

The Build (Governed Tools): You cannot just give an LLM raw access to your systems. You need a central, governed registry. Databricks shows how to use Unity Catalog to register Python functions as tools, ensuring agents only access data they have explicit permissions to use.

The Debugging (Observability): When an agent makes a mistake, how do you find out why? You need MLflow Tracing. By capturing detailed operations, inputs, and timing data through “spans,” you gain absolute visibility into the agent’s execution path and tool selection.

The Definition (Predictable Interfaces): A production agent needs a predictable API. Utilizing the MLflow ChatAgent interface standardizes inputs and outputs, manages streaming, and maintains comprehensive message history—without locking you into a single authoring framework like LangChain or LangGraph.

The Evaluation (Synthetic Quality Control): You cannot evaluate an agent manually at scale. The framework demonstrates how to generate synthetic evaluation sets straight from your documentation. This lets you use LLM judges to rigorously test quality, cost, and latency before live deployment.

The Deployment (Serving & Feedback): Deploying isn’t the finish line. Serving the agent through Mosaic AI handles autoscaling and access control. More importantly, it unlocks a Review App so subject matter experts can interact with the live agent and feed real human feedback directly back into your monitoring loops.

Why it matters: Building a prototype takes an afternoon. Building an autonomous system you can trust with your enterprise data takes rigorous tracing, evaluation, and governance.

Want to see the code? You can access the entire Databricks Agent Framework tutorial here.

The Execution Gap: The 8-Step Blueprint to Build an AI Agent

Most people think building an AI agent requires a dev team.

It doesn’t. But it does require a clear framework—and the right tools.

Here is the exact 8-step process with what is actually working in April 2026:

1. Define Purpose & Scope: One job. One user. One success metric. Map it out in Notion or Whimsical before touching any tool.

2. Write Your System Prompt: Role, goal, tone, guardrails. Treat it exactly like a job description. Test and iterate inside Claude.ai (Sonnet 4.6) or ChatGPT Playground (GPT-5.4).

3. Choose Your LLM: Stop defaulting to the hype. Match the model to the job. Use Claude Sonnet 4.6 for coding, GPT-5.4 for general versatility, Gemini 3.1 Pro for deep research (1M token context), Grok 4 for real-time data, or DeepSeek V3 if you are budget-conscious.

4. Connect Real Tools via MCP: An agent without tools is just a chatbot. Connect GitHub, Notion, Supabase, and Google Calendar through Model Context Protocol (MCP) servers in Claude or Cursor.

5. Set Up Memory: This is where most agents silently break. Establish working memory (in-context), semantic search (Pinecone or Weaviate), and structured data storage (Supabase or PostgreSQL).

6. Build Your Orchestration Layer: Routes, triggers, error handling—this is what makes it run overnight without you. Use n8n for visual workflows (cuts automation costs by 70%), LangGraph for stateful agents, or CrewAI and AutoGen for multi-agent systems.

7. Choose the Right UI: It has to live where your user already is. Deploy via chat (Chatbase or Voiceflow), no-code automation (Gumloop or Relay.app), or a custom Slack bot.

8. Test Like a Skeptic: Demos lie. Production tells the truth. Use LangSmith or Braintrust for evals. Log everything from day one and iterate weekly.

Save this. When you are ready to build, you will know exactly where to start.

The Environment Gap: How to Defend Against the 6 AI Agent Traps

As autonomous AI agents are increasingly deployed to navigate the web, they face a massive, novel security vulnerability: the information environment itself.

A groundbreaking paper from Google DeepMind introduces the concept of “AI Agent Traps”—adversarial content embedded directly into digital environments to manipulate, deceive, or exploit visiting agents.

Most security teams are focused on securing the model. But these attacks work by altering the environment rather than the model, weaponizing the agent’s own capabilities against it.

Here is exactly how these traps work and how to build a defense-in-depth architecture to secure your enterprise ecosystem.

The 6 Vectors of Attack

If you are deploying autonomous systems, you must anticipate attacks across every stage of the agent’s operational cycle.

1. Content Injection Traps (Target: Perception): These exploit the structural divergence between how humans and machines read web pages. Attackers hide malicious instructions in HTML comments, CSS, or media file binary data (steganography). Humans see a normal website, but the agent reads and executes the hidden commands.

2. Semantic Manipulation Traps (Target: Reasoning): These traps are designed to corrupt an agent’s reasoning process and evade internal safety critics without using overt commands. They skew outputs using biased framing, sentiment-laden language, or by seeding fake personas into the agent’s context.

3. Cognitive State Traps (Target: Memory & Learning): These attacks corrupt an agent’s long-term memory and knowledge bases. The most critical enterprise threat is RAG Knowledge Poisoning, where attackers inject fabricated statements into retrieval corpora so the agent treats malicious content as verified fact.

4. Behavioural Control Traps (Target: Action): These are explicit commands that hijack an agent’s instruction-following capabilities. They are often used as “confused deputy attacks” to force data exfiltration, tricking the agent into sending private data to an external adversary. They can also exploit orchestrator privileges to spawn malicious sub-agents.

5. Systemic Traps (Target: Multi-Agent Dynamics): These exploit the predictable, aggregate behavior of multiple agents sharing an environment to trigger macro-level failures. Because many agents use similar models, attackers can broadcast specific signals that trigger destructive “interdependence cascades” or cause massive congestion for limited resources.

6. Human-in-the-Loop Traps (Target: Human Overseer): In these scenarios, the agent is merely the vector; the ultimate target is the human. The compromised agent exploits cognitive biases—like automation bias—by presenting technical, benign-looking summaries of malicious work that a human reviewer will likely just blindly authorize.

The Mitigation Blueprint: Defense-in-Depth

Securing your agents requires a holistic strategy. A single firewall will not work.

· Technical Hardening: At runtime, you must deploy pre-ingestion source filters to evaluate external content credibility before it enters the agent’s context. You also need output monitors to automatically suspend the agent if anomalous behavior shifts are detected.

· Ecosystem Interventions: Enterprise ecosystems must establish transparency mechanisms, such as mandating explicit, user-verifiable citations for all synthesized information so human reviewers can track provenance.

· Close the Accountability Gap: Legal and policy frameworks must be updated to address liability. If a compromised agent commits a financial crime, your organization must know exactly how liability is distributed between the agent operator, the model provider, and the domain owner.

· Red Teaming at Scale: You cannot trust a demo. Industry leaders must develop and adopt comprehensive automated red-teaming methodologies to probe these vulnerabilities continuously before deploying agents in high-stakes environments.

The web was built for human eyes, but it is being rebuilt for machine readers. Securing the integrity of what those machines believe is the fundamental security challenge of the agentic age.

Job Opportunities

Program Manager, Artificial Intelligence - Foundation for American Innovation, apply.

Frontier AI Research Lead - Georgetown University, Center for Security and Emerging Technology, learn more here.

AI Science Advisor, California Department of Technology - California Council on Science and Technology, apply.

Safety Fellowship – OpenAI, learn more here.

Call for Proposals, AI for Information Security (Spring 2026) – Amazon, apply.

Operations Manager - Center for AI Safety, learn more here.

Senior Operations Manager - Safe AI Forum, apply.

Upcoming Events

IBM SkillsBuild: Quantum Computing 101 On April 14, IBM is hosting a free virtual session on Quantum Computing and its real-industry use cases. While primarily focused on Quantum, this expert-led session is highly relevant for tech professionals looking to understand the future hardware capabilities that will eventually intersect with, and power, next-generation enterprise AI models.

Source: https://skillsbuild.org/events

OpenAI Builder Lab: Evals in Practice On April 16, OpenAI is running a live technical session for developers and engineers called “Builder Lab: Evals in Practice.” This session focuses on how to properly test, evaluate, and benchmark AI model outputs to ensure safety and reliability when building applications on top of OpenAI’s models.

Source: https://academy.openai.com

Professional Development & Free Courses

The Alan Turing Institute – Free Artificial Intelligence Courses Start learning: Explore here

Elements of Artificial Intelligence – University of Helsinki Free foundational artificial intelligence course: here

Google Artificial Intelligence – Cloud Skills Boost Self-paced learning paths: Learn more

IBM SkillsBuild – Free Artificial Intelligence Learning & Certificates. Explore fundamentals, ethics, and generative artificial intelligence for free.

LinkedIn Learning – Top Artificial Intelligence Courses: Artificial Intelligence Foundations: Machine Learning, Python for Data Science and Artificial Intelligence, Generative Artificial Intelligence: Thoughtful Online Search.

Legal Note

All listings are for informational purposes, using official links and full organization names. No endorsements or guarantees—please verify independently.

Enjoyed this issue? Connect or follow for weekly artificial intelligence jobs, news, and opportunities!